7 Tools to Help Manage Your Data Lakes in 2021

Companies have been leveraging big data for several years now for a variety of purposes. Machine learning, visualization, and artificial intelligence have unlocked new business opportunities. As a result, storing massive quantities of data is at the forefront of an organization’s business needs.

Traditionally, data is stored in different ways depending on its type. Structured data (row and column format) is stored in databases. Unstructured data, such as videos and images, goes into another type of storage.

However, as the quantity of data grows every second, managing these stores separately becomes a nightmare. One consequence is that a major portion of the data is rendered unusable, because it becomes too expensive to process for any meaningful information. Another is duplication: because data is stored separately, two or more departments may need the same data set but cannot access the same storage, so each keeps its own copy.

This is where data lakes help. A data lake is simply a central data repository. In a data lake, data can be stored directly, whether it is structured or unstructured. It stores data using the object storage model, which provides the major benefit of scalability.
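To make the object storage model concrete, here is a minimal sketch in Python. The `ObjectStore` class, its method names, and the sample keys are hypothetical stand-ins, not the API of any real storage service. The point it illustrates is that every item, structured or not, is just bytes under a key in one flat namespace.

```python
class ObjectStore:
    """Toy in-memory stand-in for an object store (not a real service API)."""

    def __init__(self):
        # Flat namespace: each key maps to raw bytes plus free-form metadata.
        self._objects = {}

    def put(self, key, data, **metadata):
        self._objects[key] = (data, dict(metadata))

    def get(self, key):
        return self._objects[key][0]

    def list_keys(self, prefix=""):
        # "Folders" are only a naming convention: listing filters keys by prefix.
        return sorted(k for k in self._objects if k.startswith(prefix))


lake = ObjectStore()
# Structured data (a CSV file) and unstructured data (placeholder image bytes)
# live side by side in the same store.
lake.put("sales/2021/orders.csv", b"id,amount\n1,9.99\n", content_type="text/csv")
lake.put("media/logo.png", b"\x89PNG placeholder", content_type="image/png")

print(lake.list_keys("sales/"))
```

Because nothing in the store depends on the shape of the data, adding capacity is a matter of adding more keys, which is where the scalability benefit comes from.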

Benefits of Data Lakes

Some of the key advantages that data lakes provide to companies are the following:

They provide easy methods to transform unstructured raw data into structured information ready for SQL querying and other data science/machine learning operations.

They make data management efficient as they remove the need for duplicated data sets and security policies across the organization.

They make data updates fast because data does not need to be processed before it is stored. As a result, your company’s data stays up to date.

They make collaboration easy within the organization independent of the skills of users.
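The first benefit above, turning raw data into something ready for SQL, can be sketched with nothing but the Python standard library. The event records, table name, and column names below are made up for illustration, and SQLite stands in for whatever query engine sits on top of your lake.

```python
import json
import sqlite3

# Hypothetical raw events as they might land in the lake (one JSON object per line).
raw_events = """\
{"user_name": "ana", "action": "view", "ms": 120}
{"user_name": "ben", "action": "buy", "ms": 340}
{"user_name": "ana", "action": "buy", "ms": 95}
"""

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (user_name TEXT, action TEXT, ms INTEGER)")

# Parse each raw line and load it into a typed table.
rows = [json.loads(line) for line in raw_events.splitlines() if line.strip()]
conn.executemany(
    "INSERT INTO events (user_name, action, ms) VALUES (:user_name, :action, :ms)",
    rows,
)

# Once structured, the data can be queried with ordinary SQL.
purchases = conn.execute(
    "SELECT user_name, COUNT(*) FROM events "
    "WHERE action = 'buy' GROUP BY user_name ORDER BY user_name"
).fetchall()
print(purchases)
```

The raw lines are stored first and given a schema only when they are needed, which is the opposite of the traditional approach of shaping data before it is saved.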

Top Tools That Make Data Lake Management More Efficient

While data lakes by themselves bring plenty of improvements to a company that uses big data and the object storage model, the following tools help you get the most out of them.

Apache Spark

Apache Spark is an open-source engine for fast, large-scale data processing, including SQL queries on big data. It is widely used in machine learning projects. It provides high-level operators that let users quickly build applications in languages common in data science, such as Java, Scala, Python, R, and SQL.

A widely used data processing tool, Apache Spark boasts high speed and a friendly API, which makes it very appealing to developers.

LakeFS

LakeFS is an open-source data environment tool that allows you to manage object storage-based data lakes. It uses data versioning techniques to build and sustain robust data lake operations for data science and analytics. It is compatible with services such as Amazon S3, Google Cloud Storage, and MinIO. You can also pair it with tools like Apache Spark, Jupyter Notebooks, and Hadoop to upgrade your existing system.

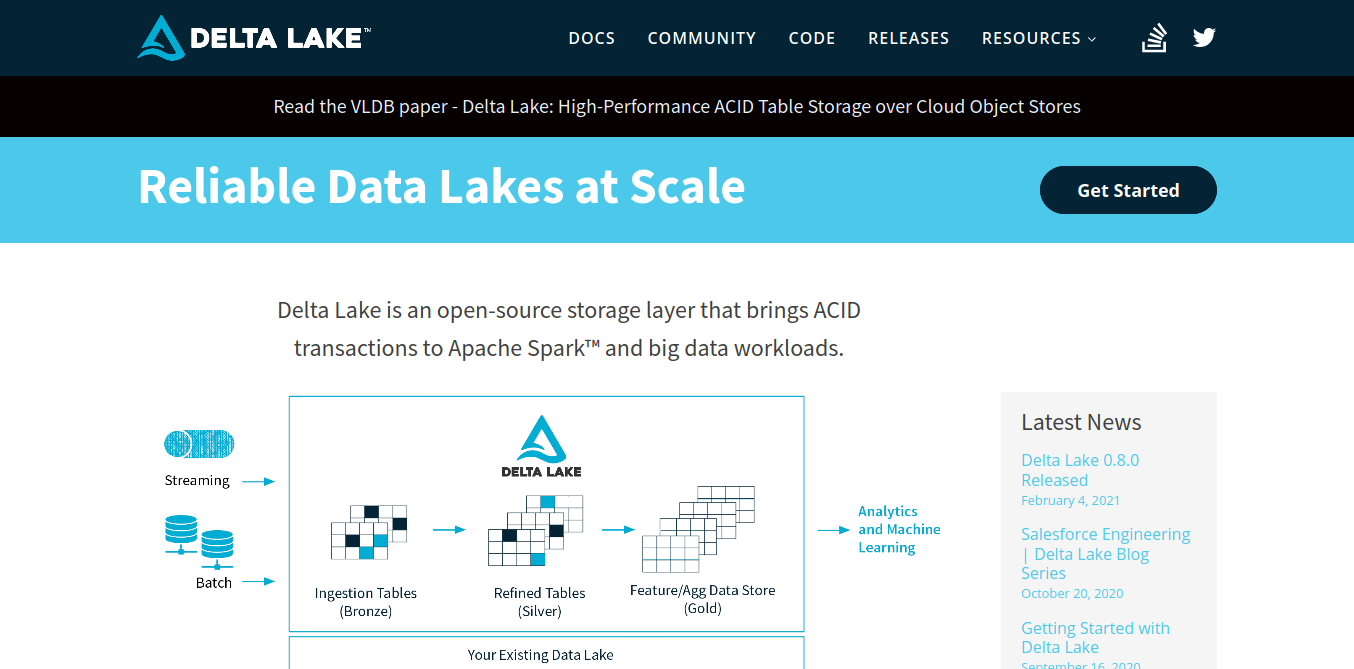

Delta Lake

Delta Lake is an open-source tool that acts as a storage layer. It provides ACID transactions and has a data versioning feature.

The major benefit of using Delta Lake shows once your project grows into big data territory. ACID transactions ensure data integrity, and data snapshots let developers focus on building instead of juggling multiple data versions by hand.
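The idea behind transactions plus snapshots can be sketched as an append-only commit log. This is a toy model under simplifying assumptions, not Delta Lake's API: the `VersionedTable` class and its methods are hypothetical, and the only rule it enforces is that every row has an `id`.

```python
class VersionedTable:
    """Toy append-only commit log; illustrates the idea, not Delta Lake's API."""

    def __init__(self):
        self._commits = []  # commit i holds the rows added in version i

    def commit(self, rows):
        # A commit is all-or-nothing: validate every row before recording any.
        for row in rows:
            if "id" not in row:
                raise ValueError("row is missing 'id'; nothing was committed")
        self._commits.append(list(rows))
        return len(self._commits) - 1  # version number of this commit

    def snapshot(self, version=None):
        # Reading a version replays the log up to and including that commit.
        if version is None:
            version = len(self._commits) - 1
        return [row for commit in self._commits[: version + 1] for row in commit]


table = VersionedTable()
v0 = table.commit([{"id": 1, "amount": 10}])
v1 = table.commit([{"id": 2, "amount": 25}])

try:
    table.commit([{"id": 3, "amount": 5}, {"amount": 7}])  # second row is invalid
except ValueError:
    pass  # the failed commit left the table unchanged

print(len(table.snapshot(v0)), len(table.snapshot()))
```

Readers asking for an older version see exactly what was committed at that point, and a failed write never leaves half of its rows behind.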

Databricks

Databricks is a platform powered by data lakes. It simplifies data analytics, data science, and data engineering by giving teams a single place to collaborate on all data, analytics, and AI tasks.

Collaboration is made easy through its data science workspace, which includes collaborative notebooks and a tool for single-click access to machine learning clusters.

AWS Lake Formation

Lake Formation is one of AWS’s major services. It allows for relatively quick creation of data lakes. A lake is created by defining the sources the data will come from and formulating data access and security policies.

Microsoft Azure Data Lake Storage

Azure Data Lake Storage lets you optimize your data lake costs with tiered storage and policy management. It also provides secure data access and management through Azure Active Directory and role-based access control.
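As a rough illustration of what a tiering policy decides, the sketch below picks a tier from how long ago an object was last read. The tier names mirror Azure's hot, cool, and archive access tiers, but the function, the constants, and the day thresholds are hypothetical. In Azure itself, this kind of rule is configured as a lifecycle management policy rather than written as application code.

```python
# Hypothetical thresholds; real policies depend on access patterns and pricing.
COOL_AFTER_DAYS = 30
ARCHIVE_AFTER_DAYS = 180


def choose_tier(days_since_last_access: int) -> str:
    """Pick a storage tier name based on how recently an object was read."""
    if days_since_last_access >= ARCHIVE_AFTER_DAYS:
        return "archive"
    if days_since_last_access >= COOL_AFTER_DAYS:
        return "cool"
    return "hot"


for age in (3, 45, 400):
    print(age, choose_tier(age))
```

Data that is rarely read moves to cheaper, slower tiers, while frequently used data stays on the faster and more expensive one.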

Data consumption and processing are also a breeze with Azure Databricks, Synapse Analytics, and HDInsight. You can also visualize data powerfully with Microsoft Power BI.

Data Version Control (DVC)

As developers, we’re all too familiar with Git; it’s a great tool for collaboration and can be used to version control our codebase. DVC is the data counterpart of Git.

It is an open-source tool that provides a command-line interface for performing various data versioning tasks. That’s not all; DVC also allows smooth collaboration within and among teams to manage machine learning models and data pipelines.
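The core idea of versioning data alongside Git can be sketched as follows: large files go into a store keyed by their content hash, and only a small pointer record is kept with the code. This is a conceptual sketch, not DVC's actual file format or commands. The `track` and `restore` functions, the in-memory `cache`, and the choice of SHA-256 are all assumptions made for illustration.

```python
import hashlib

cache = {}  # stand-in for a data cache or remote store, keyed by content hash


def track(path: str, data: bytes) -> dict:
    """Store data under its content hash and return a small pointer record."""
    digest = hashlib.sha256(data).hexdigest()
    cache[digest] = data
    # The pointer is tiny, so it can be committed to Git next to the code.
    return {"path": path, "hash": digest, "size": len(data)}


def restore(pointer: dict) -> bytes:
    """Fetch the exact bytes a pointer record refers to."""
    return cache[pointer["hash"]]


pointer_v1 = track("data/train.csv", b"id,label\n1,cat\n")
pointer_v2 = track("data/train.csv", b"id,label\n1,cat\n2,dog\n")

# Checking out an old pointer brings back the matching version of the data.
print(restore(pointer_v1) != restore(pointer_v2))
```

Because the hash changes whenever the data changes, each Git commit that stores a pointer also pins a specific version of the data set.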

In Closing

Data lakes have a lot to offer in the age of big data and AI. The tools mentioned here are not substitutes for best practices and strategies; they complement them and help ensure they are implemented. Important aspects such as security and reliability should remain the highest priorities.

Cover Photo by Markus Spiske on Unsplash